Currently, I am focused on co-optimizing brain-inspired algorithms and hardware for energy-efficient novel computing systems, including synaptic transistors, in-memory computing, spiking neural networks, and circuits.

My other current and past research topics include embedded systems, robotics, computer vision, and deep learning.

On this page, I provide more introductions to and details about my current and past research topics and work. Other interesting works that are less research-oriented are presented on the Project page.

Brain-inspired algorithm and hardware

Artificial Intelligence (AI) based on Artificial Neural Networks (ANNs) is widely used to benefit our daily lives. However, training neural networks and running inference with them on digital computers based on the von Neumann architecture consumes a large amount of energy, which is a bottleneck in the development of AI. For instance, a single AlphaGo game costs $3000 in electricity. By comparison, the human brain consumes only about 20 W and can complete more complex tasks than a computer. This has inspired researchers to ask: can we build energy-efficient, brain-like computers?

At the material and electronic-device level, synaptic devices such as memristors and synaptic transistors have been reported. In the brain, computation and memory happen in the same place, unlike in computers, which have separate processors (CPUs) and memory (RAM) connected by a data bus. Neurons and the synapses between them transmit and process the signals of our body, and the weights of the synaptic connections store what we have remembered and learned. A synaptic electronic device plays a role similar to that of a biological synapse: the conductance between two terminals of the device represents a weighted inter-neuron connection in a neural network. If a voltage encoding an input value is applied across the two terminals, the resulting current is, by Ohm's law (I = G·V), the product of the input and the weight. This performs the basic multiplication in an ANN, much like an analog arithmetic unit. In addition, the device can retain its conductance for a long period, or the conductance can be adjusted by applying programming pulses to it. Because the weights are stored in the same devices that perform the computation, this approach is called in-memory computing. With an array of synaptic devices, in which the currents of devices sharing an output line add up (Kirchhoff's current law), matrix multiplication, the most energy-consuming step in ANNs, can be performed.
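The array-level multiply-accumulate described above can be illustrated with a minimal, idealized sketch. It assumes one device at each row/column crossing, inputs applied as row voltages, and outputs read as column currents, and it ignores wire resistance, device variation, and sneak paths. The function name `crossbar_mvm` is hypothetical.

```python
def crossbar_mvm(conductances, voltages):
    """Idealized analog vector-matrix multiply on a synaptic-device array.

    conductances[i][j]: conductance (S) of the device joining input row i to output column j
    voltages[i]:        input voltage (V) applied to row i
    Returns the current (A) collected on each output column.
    """
    n_rows, n_cols = len(conductances), len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("need one input voltage per row")
    currents = [0.0] * n_cols
    for j in range(n_cols):
        for i in range(n_rows):
            # Ohm's law: each device contributes I = G * V ...
            # ... and Kirchhoff's current law sums the contributions on column j.
            currents[j] += conductances[i][j] * voltages[i]
    return currents


# Example: a 2x2 array with conductances in the microsiemens range and 0.1-0.2 V inputs.
G = [[1e-6, 2e-6],
     [3e-6, 4e-6]]
V = [0.1, 0.2]
print(crossbar_mvm(G, V))  # approximately [7e-07, 1e-06] A
```

Each output current equals one entry of the vector-matrix product, so a whole layer's multiply-accumulate happens in a single read of the array.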

My detailed works in this topic are introduced as follows:

Simulation of electrical characteristics of synaptic transistors in software

Ref: github.com/neurosim/MLP_NeuroSim_V3.0

There are six types of non-ideal characteristics of synaptic devices, shown in the Figure above. In my software, I modeled and simulated two of them: non-linearity and limited precision. In particular, given the current conductance level, my program calculates the conductance level of the next state after a potentiation or depression pulse is applied to the gate terminal of the transistor.
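A minimal sketch of this kind of next-state calculation follows; it is not the code of my simulator. It assumes an exponential-saturation curve G(p) = B(1 - exp(-p/A)) + G_min over a fixed number of pulse states, similar in spirit to the nonlinear update models used in NeuroSim-style simulators. All parameter values are hypothetical, and for simplicity depression walks back along the same curve (real devices often have a separate depression curve).

```python
import math

# Hypothetical device parameters, for illustration only (not measured values).
G_MIN = 1e-9     # minimum conductance (S)
G_MAX = 1e-6     # maximum conductance (S)
P_MAX = 64       # pulses from G_MIN to G_MAX -> 65 discrete states (limited precision)
A = 20.0         # nonlinearity constant: smaller A -> more nonlinear update curve

# Scale factor so the curve passes through G_MIN at p = 0 and G_MAX at p = P_MAX.
B = (G_MAX - G_MIN) / (1.0 - math.exp(-P_MAX / A))


def pulse_to_conductance(p):
    """Conductance after p potentiation pulses starting from G_MIN."""
    return B * (1.0 - math.exp(-p / A)) + G_MIN


def conductance_to_pulse(g):
    """Invert the curve: the real-valued pulse number corresponding to conductance g."""
    g = min(max(g, G_MIN), G_MAX)            # keep g inside the device's range
    return -A * math.log(1.0 - (g - G_MIN) / B)


def next_conductance(g, potentiate):
    """Conductance after one potentiation (True) or depression (False) gate pulse."""
    p = round(conductance_to_pulse(g))       # snap to the nearest discrete state
    p = p + 1 if potentiate else p - 1       # one pulse moves one state along the curve
    p = max(0, min(P_MAX, p))                # the device saturates at both ends
    return pulse_to_conductance(p)
```

Because the curve flattens toward G_MAX, the same pulse produces a large conductance step near G_MIN and a much smaller one near G_MAX, which is the non-linearity that degrades training accuracy.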

Image classification using CNN based on synaptic transistor (simulation)

To further evaluate our synaptic devices when running AI applications, I integrated the above device simulation program into PyTorch. Each pair of devices represents one weight value in the neural network. To update these device-based weights, in each training iteration the program updates the conductances by applying pulses according to the magnitudes of the gradients calculated in the back-propagation step. Because this is a simulation, the states of all conductances and pulses are calculated and stored in my program rather than running on real hardware.
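How a gradient could be turned into gate pulses on a device pair is sketched below. It assumes the common differential encoding (weight proportional to G+ minus G-), linear devices with a fixed number of levels, and plain SGD; the class `DevicePair` and its parameters are hypothetical and stand in for the nonlinear device model of the actual program.

```python
class DevicePair:
    """Hypothetical differential pair: weight = w_max * (n_plus - n_minus) / levels.

    n_plus / n_minus count the potentiation pulses received by the G+ and G- devices;
    each device is assumed linear with `levels` discrete states (limited precision).
    """

    def __init__(self, levels=32, w_max=1.0):
        self.levels = levels
        self.w_max = w_max
        self.n_plus = 0
        self.n_minus = 0

    def weight(self):
        return self.w_max * (self.n_plus - self.n_minus) / self.levels

    def update(self, grad, lr):
        """Apply one SGD step as gate pulses; return the number of pulses actually applied."""
        delta = -lr * grad                       # desired weight change
        step = self.w_max / self.levels          # weight change per pulse
        n = round(abs(delta) / step)             # changes below half a step are lost
        if delta > 0:                            # increase weight: potentiate G+
            old = self.n_plus
            self.n_plus = min(self.levels, self.n_plus + n)
            return self.n_plus - old
        if delta < 0:                            # decrease weight: potentiate G-
            old = self.n_minus
            self.n_minus = min(self.levels, self.n_minus + n)
            return self.n_minus - old
        return 0


pair = DevicePair(levels=32)
pair.update(grad=-0.5, lr=0.25)   # 4 pulses on G+, weight becomes 0.125
pair.update(grad=1e-4, lr=0.1)    # too small for one pulse: weight unchanged
print(pair.weight())
```

Rounding the desired change to a whole number of pulses shows directly how limited precision discards small gradient updates, and the clamp at `levels` models device saturation.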

I implemented a CNN for COVID-19 classification, consisting of a ResNet-18 backbone for feature extraction and a fully connected layer for classification. With the non-ideal device properties (non-linearity and limited precision) included, the accuracy reached 80%, whereas the accuracy in the ideal case without device effects was above 95%.

Image perception and classification based on opto-electric artificial synapse (simulation)

Our perovskite-based synaptic transistors can respond to light: illumination briefly enhances the channel current of the transistor. This property allows the transistor to combine the functions of an artificial retina and a synapse.

I first wrote a simulation program to obtain the image seen by the transistor array. I found that, when responding to light, our transistor shows a property similar to the persistence of vision in the human eye: for a moving object, the recorded area of the image is blurred. A facial recognition control scenario was then used to evaluate our device for AI applications. A CNN classifier was built to distinguish between clear faces (stationary for half a second) and blurred faces (passers-by), reaching over 90% accuracy.
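The persistence effect can be illustrated with a toy one-dimensional model. This sketch assumes that each pixel's light-induced current decays exponentially with a hypothetical time constant `tau` and adds linearly to new illumination; it is not the device model used in the actual simulation.

```python
import math


def simulate_row(frames, tau=5.0, gain=1.0):
    """Final photocurrent of a 1-D row of light-sensitive transistors.

    frames: list of time steps, each a list of light intensities (one per pixel).
    Each pixel's extra channel current decays exponentially with a hypothetical
    time constant tau (in time steps) and is boosted by incident light (gain).
    """
    decay = math.exp(-1.0 / tau)
    current = [0.0] * len(frames[0])
    for light in frames:
        current = [c * decay + gain * x for c, x in zip(current, light)]
    return current


def spot(width, pos):
    """A single bright pixel at index pos."""
    return [1.0 if i == pos else 0.0 for i in range(width)]


def lit_pixels(img, frac=0.1):
    """Number of pixels brighter than frac * the brightest pixel."""
    peak = max(img)
    return sum(1 for v in img if v > frac * peak)


WIDTH, STEPS = 10, 5
still = simulate_row([spot(WIDTH, 4) for _ in range(STEPS)])   # subject holds still
moving = simulate_row([spot(WIDTH, t) for t in range(STEPS)])  # subject moves 1 px per step
print(lit_pixels(still), lit_pixels(moving))  # 1 5
```

The stationary spot accumulates into one bright pixel, while the moving spot leaves a fading trail across five pixels, the kind of blur a classifier can use to separate stationary faces from passers-by.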

Novel algorithm for neuromorphic hardware

I am exploring and learning brain-inspired algorithms, such as spiking neural networks (SNNs), spike-timing-dependent plasticity (STDP) learning rules, and neural circuit policies (NCPs). I am also trying to combine these novel algorithms with synaptic transistors and circuits.
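Two of the building blocks mentioned above can be written in a few lines. The sketch below shows a discrete-time leaky integrate-and-fire (LIF) neuron with reset-to-zero and the standard pair-based STDP rule; all parameter values (`beta`, `threshold`, `a_plus`, `a_minus`, time constants) are hypothetical illustration values.

```python
import math


def lif_spikes(inputs, beta=0.9, threshold=1.0):
    """Discrete-time leaky integrate-and-fire neuron; returns a 0/1 spike train."""
    v, spikes = 0.0, []
    for x in inputs:
        v = beta * v + x          # leak the membrane potential, then integrate the input
        if v >= threshold:
            spikes.append(1)
            v = 0.0               # reset the membrane potential after a spike
        else:
            spikes.append(0)
    return spikes


def stdp_dw(dt, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Pair-based STDP weight change for dt = t_post - t_pre (ms)."""
    if dt > 0:   # pre-synaptic spike before post-synaptic spike -> potentiation
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:   # post before pre -> depression
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0


print(lif_spikes([0.4] * 10))  # [0, 0, 1, 0, 0, 1, 0, 0, 1, 0]
```

In a synaptic-transistor implementation, the sign and size of `stdp_dw` would map naturally onto the polarity and number of gate pulses.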

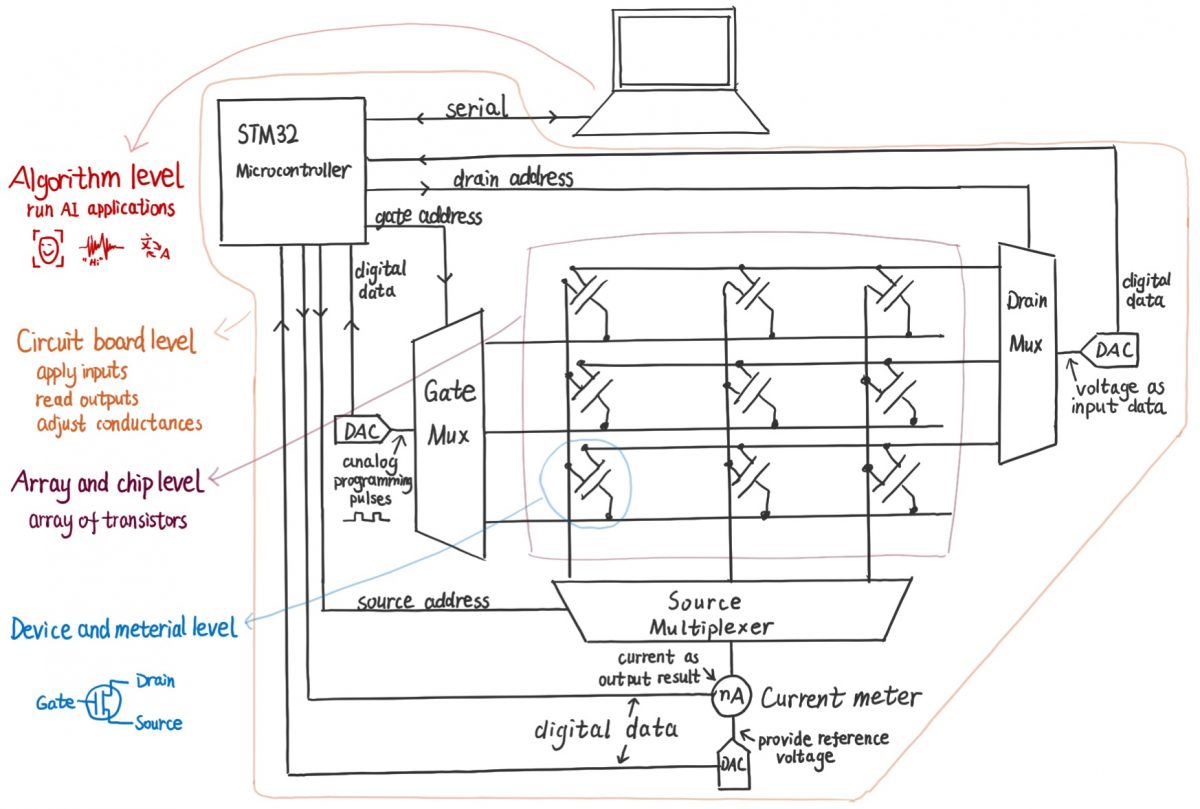

Hardware platform for synaptic transistor array

The research above reported three-terminal, solution-processed synaptic thin-film transistors and AI applications based on simulation. The channel conductance between the drain and source terminals represents a weight in a neural network, and pulses applied to the gate terminal update this conductance. To run ANN applications fully in hardware, I am currently developing a circuit board that applies drain voltages as the input data, measures the drain-source currents as the output, and applies gate pulses to adjust the conductance of the transistors in the array. Software is also needed to bridge the gap between the hardware and the AI algorithms so that AI can run on the hardware.
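A minimal sketch of the host-side bridging software is shown below. It assumes a crossbar-like arrangement in which the currents of one column are summed, and a board exposing three operations (set drain voltages, read currents, apply a gate pulse); the `MockBoard` class, its method names, and the write-verify programming loop are hypothetical, with the array simulated in software so the logic can be tried without hardware.

```python
class MockBoard:
    """Software stand-in for the measurement board (hypothetical interface).

    A real driver would send these commands to the board over USB/serial; here the
    transistor array is simulated so the host-side logic can be tested without hardware.
    """

    def __init__(self, rows, cols, g_step=0.05, g_max=1.0):
        self.rows, self.cols = rows, cols
        self.g = [[0.0] * cols for _ in range(rows)]  # channel conductances (normalized)
        self.v = [0.0] * rows                          # drain voltages, one per row
        self.g_step, self.g_max = g_step, g_max

    def set_drain_voltages(self, volts):
        self.v = list(volts)

    def read_currents(self):
        # Each column current is the sum of G * V over the rows (idealized).
        return [sum(self.g[r][c] * self.v[r] for r in range(self.rows))
                for c in range(self.cols)]

    def apply_gate_pulse(self, row, col, potentiate):
        delta = self.g_step if potentiate else -self.g_step
        self.g[row][col] = min(self.g_max, max(0.0, self.g[row][col] + delta))


def read_conductance(board, row, col, v_read=0.1):
    """Measure one device by driving only its row with a small read voltage."""
    volts = [0.0] * board.rows
    volts[row] = v_read
    board.set_drain_voltages(volts)
    return board.read_currents()[col] / v_read


def program_cell(board, row, col, target, tol=0.03, max_pulses=100):
    """Write-verify loop: measure, pulse toward the target, repeat. Returns pulses used."""
    for n in range(max_pulses):
        g = read_conductance(board, row, col)
        if abs(g - target) <= tol:
            return n
        board.apply_gate_pulse(row, col, potentiate=g < target)
    return max_pulses


def forward(board, x):
    """Run one layer on the array: inputs as drain voltages, outputs as currents."""
    board.set_drain_voltages(x)
    return board.read_currents()
```

Keeping the board behind a small interface like this lets the same training code drive either the simulator or the real circuit board.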

Algorithm optimization for deep learning on microcontroller

Keyword spotting (KWS) is beneficial for voice-based user interaction with low-power devices at the edge. Such devices are usually always on, so processing audio at the edge saves bandwidth and protects privacy. Edge devices, for example Cortex-M based microcontrollers, typically have limited memory, computational performance, power, and cost. The challenge is to meet the high computational and low-latency requirements of deep learning on these devices. I developed a small-footprint KWS system running on a 216 MHz Cortex-M7 microcontroller with 512 KB of SRAM. The selected CNN architecture has a reduced number of operations to meet the constraints of edge devices, and it produces a classification result every 37 ms, including real-time audio feature extraction. I further evaluated the actual performance of different pruning and quantization methods on the microcontroller, including different sparsity granularities, skipping zero weights, a weight-prioritized loop order, and SIMD instructions. The results show that accelerating unstructured-pruned models on microcontrollers is considerably challenging and that structured pruning is more hardware-friendly than unstructured pruning. The results also confirmed the performance improvements from quantization and SIMD instructions.
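To make the evaluated techniques concrete, the sketch below shows symmetric int8 quantization and a fully connected layer that skips zero weights by iterating over stored nonzero weights. Reading "weight-prioritized loop order" as "outer loop over stored weights" is an assumption, and the function names are hypothetical; on the microcontroller the same logic would be written in C with int32 accumulators.

```python
def quantize_int8(values):
    """Symmetric per-tensor int8 quantization: q = round(x / scale), scale = max|x| / 127."""
    max_abs = max(abs(v) for v in values) or 1.0
    scale = max_abs / 127.0
    return [max(-127, min(127, round(v / scale))) for v in values], scale


def compress_nonzero(weights, rows, cols):
    """Store an unstructured-pruned, row-major weight matrix as (row, col, value) triples."""
    return [(r, c, weights[r * cols + c])
            for r in range(rows) for c in range(cols)
            if weights[r * cols + c] != 0]


def sparse_fc(nonzeros, x, rows):
    """y = W x, iterating over stored weights so zero weights are never visited.

    Every multiply-accumulate needs an index lookup and scattered access to x and acc,
    one plausible reason unstructured sparsity is hard to speed up on a microcontroller.
    """
    acc = [0] * rows                 # integer accumulators (int32 on the MCU)
    for r, c, w in nonzeros:
        acc[r] += w * x[c]
    return acc


def dense_fc(weights, x, rows, cols):
    """Reference dense y = W x for comparison."""
    return [sum(weights[r * cols + c] * x[c] for c in range(cols)) for r in range(rows)]


W = [3, 0, -2, 0,
     0, 5, 0, 1]                     # 2x4 int8 weights, half of them pruned
x = [1, 2, 3, 4]
print(sparse_fc(compress_nonzero(W, 2, 4), x, 2), dense_fc(W, x, 2, 4))  # [-3, 14] [-3, 14]
```

Structured pruning, by contrast, removes whole rows or channels, so the remaining computation stays dense and can use contiguous loads and SIMD multiply-accumulate instructions.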

[GitHub] [Arxiv]

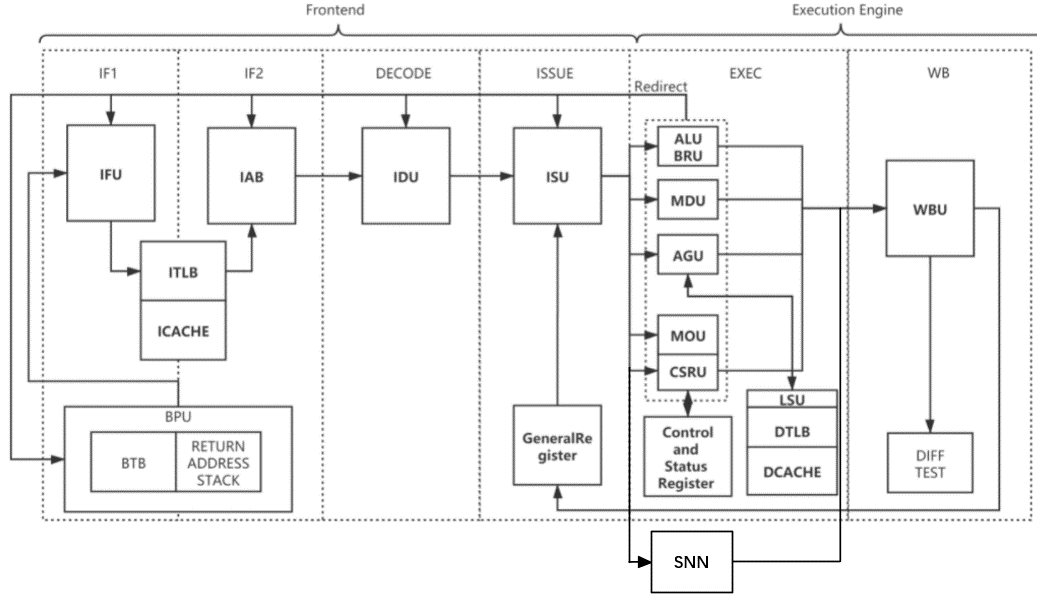

Brain-Inspired Processor and Applications by Extending the RISC-V Instruction Set

A first-generation open-source neuromorphic processor for accelerating spiking neural networks (SNNs) was developed based on the NutShell open-source processor. It uses an extended RISC-V instruction set: our arithmetic units (neuron models, surrogate gradient functions, …) were added to NutShell to implement the extended instructions. The processor is named Wenquxing-22A. See [Arxiv] [GitHub].
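As an illustration of how an extended instruction fits into RISC-V, the sketch below packs the fields of a 32-bit R-type instruction and places a custom instruction in the custom-0 opcode space that the RISC-V specification reserves for extensions. The mnemonic `lif` and its funct7/funct3 values are hypothetical and are not the actual Wenquxing-22A encoding.

```python
CUSTOM_0 = 0b0001011   # major opcode reserved by the RISC-V spec for custom extensions


def encode_r_type(funct7, rs2, rs1, funct3, rd, opcode=CUSTOM_0):
    """Pack the fields of a 32-bit RISC-V R-type instruction."""
    fields = {"funct7": (funct7, 7), "rs2": (rs2, 5), "rs1": (rs1, 5),
              "funct3": (funct3, 3), "rd": (rd, 5), "opcode": (opcode, 7)}
    for name, (value, bits) in fields.items():
        if not 0 <= value < (1 << bits):
            raise ValueError(f"{name}={value} does not fit in {bits} bits")
    return (funct7 << 25) | (rs2 << 20) | (rs1 << 15) | (funct3 << 12) | (rd << 7) | opcode


# Sanity check against a standard instruction: add x3, x1, x2 (OP opcode 0b0110011).
assert encode_r_type(0, 2, 1, 0, 3, opcode=0b0110011) == 0x002081B3

# Hypothetical custom instruction "lif a0, a0, a1": rd <- updated membrane potential
# computed from rs1 (old potential) and rs2 (input). The funct values are made up.
LIF_FUNCT7, LIF_FUNCT3 = 0b0000001, 0b000
word = encode_r_type(LIF_FUNCT7, rs2=11, rs1=10, funct3=LIF_FUNCT3, rd=10)
print(f"{word:#010x}")  # 0x02b5050b
```

The decoder in the processor matches the opcode and funct fields and routes the operands to the corresponding neuron-model arithmetic unit.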

I am currently developing a low-power ECG recognition application based on this RISC-V neuromorphic processor, and I am also trying to improve the performance of the processor.

Embedded System Design for Environmental and Energy Fields

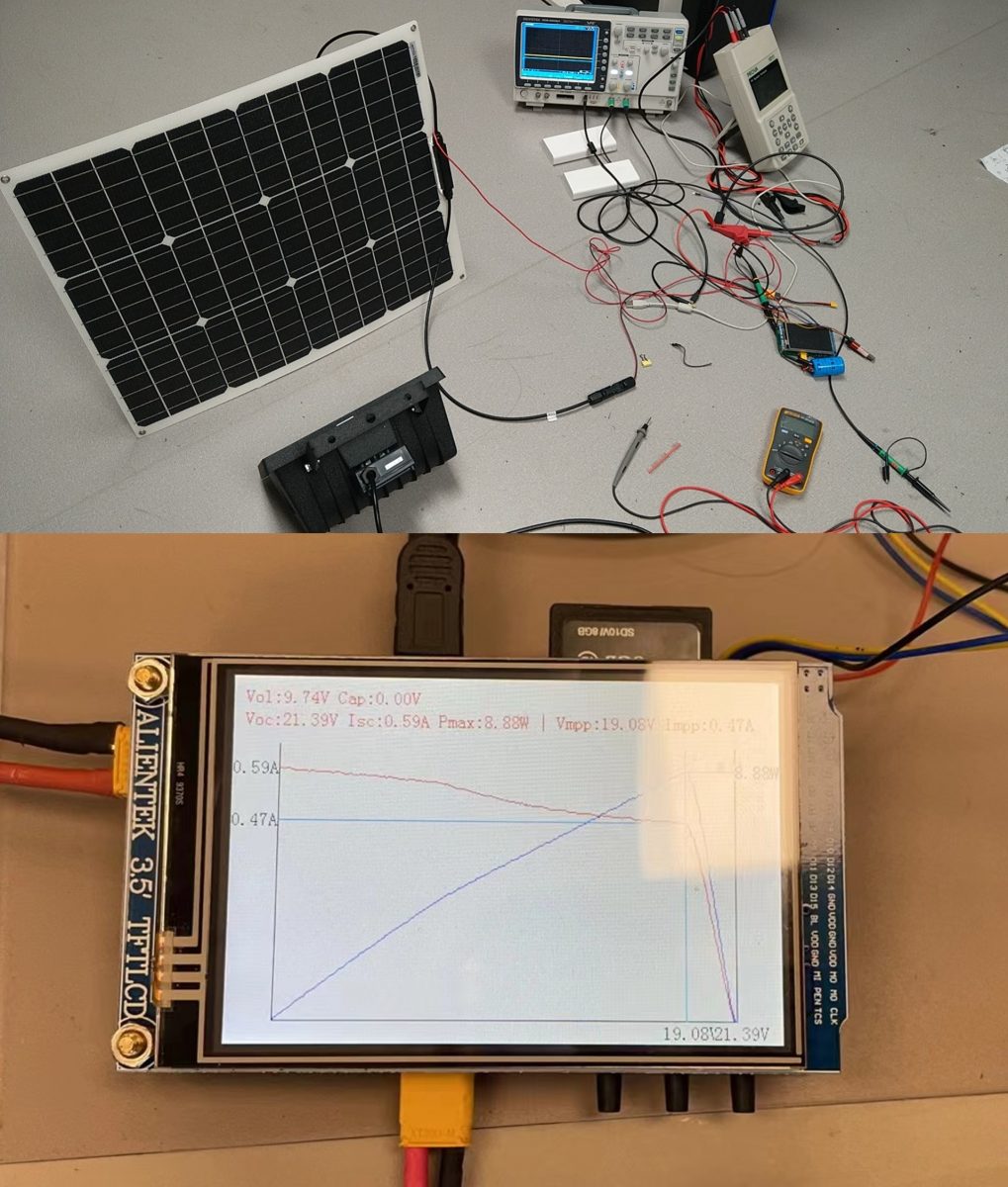

Photovoltaic maximum power point meter

To determine the voltage and current at the maximum power point of a photovoltaic (PV) panel, we developed a meter that measures the current at different voltages. The meter first discharges a supercapacitor to 0 V and then connects the PV panel to the capacitor, so the PV voltage rises from 0 V to the open-circuit voltage as the capacitor charges. Finally, the meter displays the I-V and P-V curves on screen, marks the maximum power point, and saves the raw I-t and V-t data to an SD card.
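Once the time-aligned voltage and current samples of the sweep are available, finding the maximum power point reduces to computing P = V·I for every sample and taking the largest. The sketch below assumes the samples are already paired by timestamp; the function names and sample values are made up for illustration.

```python
def power_curve(voltages, currents):
    """P-V curve from samples recorded while the supercapacitor charges."""
    return [v * i for v, i in zip(voltages, currents)]


def find_mpp(voltages, currents):
    """Return (V_mp, I_mp, P_max): the sample with the largest power."""
    powers = power_curve(voltages, currents)
    k = max(range(len(powers)), key=lambda idx: powers[idx])
    return voltages[k], currents[k], powers[k]


# Made-up sweep from short circuit (0 V) to open circuit (20 V).
v = [0.0, 5.0, 10.0, 15.0, 18.0, 20.0]
i = [3.0, 2.95, 2.9, 2.6, 1.8, 0.0]
print(find_mpp(v, i))  # maximum power at 15 V and 2.6 A
```

A real sweep has many more samples, so the discrete maximum lands close to the true maximum power point without any curve fitting.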

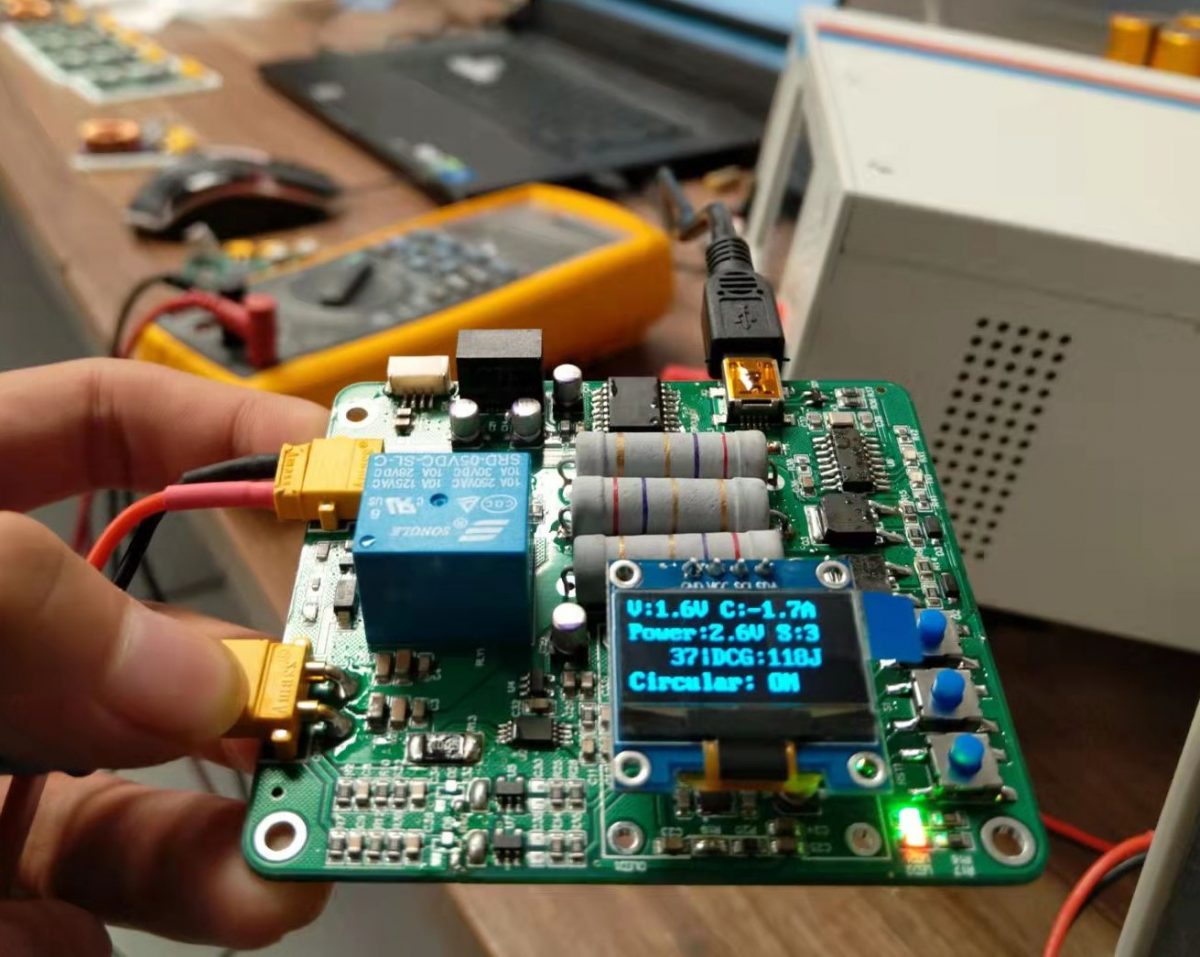

Cycle life tester for supercapacitors

To predict a supercapacitor's lifetime and its performance over the life cycle, this tester measures the cycle life of the supercapacitor by repeatedly charging and discharging it. It shows readings such as voltage and current on an OLED display, prints them over a serial port, and writes them to flash memory.
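One way such a tester could turn each cycle's measurements into a lifetime figure is sketched below. It assumes constant-current discharge, so capacitance follows from C = I·Δt/ΔV, and an end-of-life threshold on capacitance retention; the 80% default and the function names are assumed example values, not the tester's actual criterion.

```python
def capacitance_from_discharge(current_a, duration_s, v_start, v_end):
    """Constant-current discharge: C = I * dt / dV (farads)."""
    return current_a * duration_s / (v_start - v_end)


def cycles_to_end_of_life(capacitances, retention_limit=0.8):
    """Index of the first cycle whose capacitance falls below retention_limit * initial value.

    Returns None if the limit is never crossed. The 0.8 default is an assumed example
    threshold for illustration only.
    """
    c0 = capacitances[0]
    for cycle, c in enumerate(capacitances):
        if c < retention_limit * c0:
            return cycle
    return None


# 2 A for 5 s while the voltage drops from 2.5 V to 1.25 V -> 8 F.
print(capacitance_from_discharge(2.0, 5.0, 2.5, 1.25))
print(cycles_to_end_of_life([10.0, 9.5, 8.5, 7.9, 7.5]))  # 3
```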

Photovoltaic sun-chasing platform

We developed a three-degree-of-freedom PV sun-chasing platform. It is driven by three brushless motors connected to screw mechanisms that reduce speed and increase force. I worked on the embedded software for motor control.
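A sun-chasing controller needs target angles to drive the motors toward. The sketch below computes an approximate sun elevation and azimuth using Cooper's declination formula and local solar time; whether the platform used a calculation like this or light sensors is not stated, so this is an assumed approach. It ignores atmospheric refraction and the clock-to-solar-time correction, and it is not valid at the poles or with the sun exactly at the zenith.

```python
import math


def solar_position(latitude_deg, day_of_year, solar_time_h):
    """Rough sun elevation and azimuth in degrees (azimuth clockwise from north)."""
    # Cooper's approximation of the solar declination.
    decl = math.radians(23.45) * math.sin(math.radians(360.0 / 365.0 * (284 + day_of_year)))
    hour_angle = math.radians(15.0 * (solar_time_h - 12.0))   # negative in the morning
    lat = math.radians(latitude_deg)

    sin_elev = (math.sin(lat) * math.sin(decl)
                + math.cos(lat) * math.cos(decl) * math.cos(hour_angle))
    elev = math.asin(max(-1.0, min(1.0, sin_elev)))

    cos_az = ((math.sin(decl) - math.sin(elev) * math.sin(lat))
              / (math.cos(elev) * math.cos(lat)))
    az = math.degrees(math.acos(max(-1.0, min(1.0, cos_az))))  # clamp rounding errors
    if hour_angle > 0:            # afternoon: the sun is west of the meridian
        az = 360.0 - az
    return math.degrees(elev), az


# Around the March equinox (day 81) at 40 degrees N, solar noon: about 50 deg high, due south.
print(solar_position(40.0, 81, 12.0))
```

The motor-control loop would then move each axis until the panel orientation matches these targets.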

Digital Twin based on Unity for Photovoltaic Systems Simulation

An algorithm based on the Unity engine accurately calculates the amount of light received by the PV panels on a building throughout the year, taking into account the geographic location, the angle of the PV panels, and shading from the building itself and from other buildings.
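The per-time-step core of such a calculation is the angle between the sun and the panel. The language-neutral sketch below, written in Python rather than Unity's C#, computes the direct (beam) irradiance on a tilted panel from the sun's elevation and azimuth. The `shaded` flag stands in for the shading test, which in a Unity scene would plausibly come from a raycast against the building meshes; diffuse and reflected light are not modeled, and the function names are hypothetical.

```python
import math


def cos_incidence(sun_elev_deg, sun_az_deg, tilt_deg, panel_az_deg):
    """Cosine of the angle between the sun direction and the panel normal.

    tilt_deg: panel tilt from horizontal; panel_az_deg: direction the panel faces,
    clockwise from north (same convention as the sun azimuth).
    """
    e, t = math.radians(sun_elev_deg), math.radians(tilt_deg)
    da = math.radians(sun_az_deg - panel_az_deg)
    return math.sin(e) * math.cos(t) + math.cos(e) * math.sin(t) * math.cos(da)


def direct_irradiance_on_panel(dni, sun_elev_deg, sun_az_deg, tilt_deg, panel_az_deg, shaded):
    """Beam irradiance (W/m^2) on the panel for one time step.

    dni: direct normal irradiance (W/m^2); shaded: True if the sun ray to the panel is blocked.
    """
    if shaded or sun_elev_deg <= 0:          # sun blocked or below the horizon
        return 0.0
    # A negative cosine means the sun is behind the panel, so no beam light arrives.
    return dni * max(0.0, cos_incidence(sun_elev_deg, sun_az_deg, tilt_deg, panel_az_deg))


# South-facing panel tilted 60 degrees with the sun 30 degrees high in the south:
# the panel faces the sun directly, so it receives the full 800 W/m^2.
print(direct_irradiance_on_panel(800.0, 30.0, 180.0, 60.0, 180.0, shaded=False))
```

Summing these values over every time step of the year, multiplied by the step length and panel area, gives the yearly direct energy received by each panel.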